Securing the Perimeter from Within: AI’s Role in Battling Insider Threats

- Aug 21, 2025


Let’s face it - firewalls, endpoint detection systems, and zero-trust architectures have come a long way. But there’s one threat that continues to slip through the cracks. It’s not some hooded hacker in a dark basement or a rogue nation-state group hammering away from across the globe. It’s someone already inside. A trusted user. Maybe even someone on your team.

Insider threats don’t always come with malicious intent. Sure, some insiders are deliberate saboteurs. But others? They’re well-meaning employees who unknowingly expose sensitive data through sloppy habits, phishing bait, or misconfigured cloud settings. Intent doesn’t always matter when the damage is done.

Now here’s where AI rolls up its sleeves.

Artificial intelligence isn’t about replacing your security team. It’s about giving them eyes in the back of their head. And ears. And pattern recognition on steroids. When deployed with care and purpose, AI can detect subtle behavioral shifts, sniff out privilege misuse, and flag high-risk anomalies faster than traditional rule-based systems ever could.

Why Insider Threats Keep CISOs Up at Night

Insiders are already past the gates. They’ve got credentials, know how things work, and can exploit their access without triggering the usual alerts.

You can have Multi-Factor Authentication (MFA), strict access control, and encrypted pipelines. Still, if an employee downloads gigabytes of customer data at 2 AM from a company laptop, the technology needs to do more than log it. It needs to understand the context.

Here’s what makes insiders tough to spot:

Baseline familiarity: They know what “normal” looks like - and how to blend in.

Access authority: Often, they have legitimate access to sensitive systems.

Low-and-slow tactics: Some insiders exfiltrate data over weeks or months to avoid detection.

Privileged users: IT admins or developers sometimes pose the highest risk - intentionally or not.

That’s the headache. But with AI, you get a chance to make sense of the chaos before it becomes costly.
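
To make the low-and-slow point concrete, here is a minimal sketch of one way to catch it: track a trailing-window total alongside the per-day limit. The function names, the 500 MB daily limit, and the 3000 MB 30-day limit are all assumptions for illustration, not values from any particular product.

```python
from collections import deque


def rolling_totals(daily_mb, window_days=30):
    """Yield (day_index, total transferred over the trailing window)."""
    window = deque()
    total = 0.0
    for day, mb in enumerate(daily_mb):
        window.append(mb)
        total += mb
        if len(window) > window_days:
            total -= window.popleft()  # drop the day that fell out of the window
        yield day, total


def first_breach(daily_mb, daily_limit_mb=500.0, window_limit_mb=3000.0,
                 window_days=30):
    """Return the first day index on which either limit is exceeded, else None."""
    for day, total in rolling_totals(daily_mb, window_days):
        if daily_mb[day] > daily_limit_mb or total > window_limit_mb:
            return day
    return None


# Hypothetical user moving 150 MB a day: never close to the daily limit,
# but the trailing total keeps climbing.
steady_drip = [150.0] * 40
breach_day = first_breach(steady_drip)
```

A per-day rule alone would never fire on `steady_drip`; the windowed total is what surfaces it.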

AI as Your Digital Behavioral Analyst

So how does AI help here?

Think of AI as your behavioral analyst, pulling 12-hour shifts without a break. It doesn’t get tired, distracted, or emotionally attached to the people it monitors. It crunches logs, analyzes workflows, and spots things the human eye might gloss over.

But not all AI is created equal.

The most effective systems combine machine learning, user and entity behavior analytics (UEBA), and natural language processing (NLP) to paint a real-time picture of what's happening across your infrastructure.

Here’s where AI shines in insider threat detection:

1. Context-Aware Anomaly Detection

Instead of throwing up red flags every time someone works late, AI compares current behavior against a tailored user profile. If a junior analyst suddenly accesses executive reports or tries to copy hundreds of files to a USB, that’s not normal. AI knows. And it escalates accordingly.
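
A rough sketch of the "tailored user profile" idea: score today's activity against that user's own history rather than a global rule. This uses a plain z-score, which is a deliberately simple stand-in for whatever model a real UEBA product uses; the sample counts and the threshold of 3.0 are assumptions.

```python
from statistics import mean, stdev


def anomaly_score(history, today):
    """Z-score of today's value measured against this user's own history."""
    if len(history) < 2:
        return 0.0  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return 0.0 if today == mu else float("inf")
    return (today - mu) / sigma


def is_anomalous(history, today, threshold=3.0):
    """Flag only unusually HIGH activity relative to the user's baseline."""
    return anomaly_score(history, today) > threshold


# Hypothetical daily file-access counts for two very different users
junior_analyst = [20, 25, 22, 18, 24, 21, 23]
backup_admin = [900, 1100, 1000, 950, 1050, 980, 1020]
```

With these numbers, 400 file accesses in a day is far outside the junior analyst's baseline, while 1,100 is an ordinary day for the backup admin. Same kind of event, different context.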

2. Privileged Access Monitoring

You trust your admins. But trust isn’t a security strategy. AI tools like Varonis, Microsoft Defender for Endpoint, and Exabeam track how privileged accounts behave, when they escalate rights, and whether they're accessing systems outside their scope.
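
Stripped to its core, the scope check looks something like the sketch below. It does not reflect how Varonis, Defender, or Exabeam work internally; the account names, the `APPROVED_SCOPE` mapping, and the `AccessEvent` shape are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical mapping of privileged accounts to the systems they are approved for
APPROVED_SCOPE = {
    "db-admin-01": {"orders-db", "inventory-db"},
    "net-admin-02": {"core-switch", "vpn-gateway"},
}


@dataclass(frozen=True)
class AccessEvent:
    account: str
    system: str
    escalated: bool  # True if the session raised its privileges


def review(events):
    """Return (event, reason) pairs that deserve a second look."""
    findings = []
    for event in events:
        scope = APPROVED_SCOPE.get(event.account)
        if scope is None:
            findings.append((event, "unknown privileged account"))
        elif event.system not in scope:
            findings.append((event, "outside approved scope"))
        elif event.escalated:
            findings.append((event, "privilege escalation"))
    return findings


events = [
    AccessEvent("db-admin-01", "orders-db", False),    # routine, not reported
    AccessEvent("db-admin-01", "hr-files", False),     # outside approved scope
    AccessEvent("net-admin-02", "vpn-gateway", True),  # in scope, but escalated
    AccessEvent("temp-admin", "orders-db", False),     # account not in the mapping
]
findings = review(events)
```

A static allow-list like this is only the starting point; the behavioral layer is what decides which findings are worth an analyst's time.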

3. Natural Language Processing (NLP) for Communication Monitoring

NLP models can sift through internal comms - think Slack, email, Jira tickets - to identify policy violations, emotional distress signals, or disgruntled language patterns. One outburst won’t trigger an alarm. But a pattern might.
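
The "one outburst versus a pattern" distinction can be sketched as a windowed count of flagged messages. The keyword matcher here is only a stand-in for a trained NLP classifier, and the phrases, the three-hit minimum, and the 14-day window are assumptions.

```python
from datetime import datetime, timedelta

# Stand-in for a trained classifier; a real system would not rely on keywords.
FLAG_TERMS = ("bypass the policy", "nobody will notice", "before i quit")


def is_flagged(text):
    lowered = text.lower()
    return any(term in lowered for term in FLAG_TERMS)


def sustained_pattern(messages, min_hits=3, window=timedelta(days=14)):
    """messages: list of (timestamp, text) for one user.

    True only if at least min_hits flagged messages fall inside one window.
    """
    hits = sorted(ts for ts, text in messages if is_flagged(text))
    for i in range(len(hits) - min_hits + 1):
        if hits[i + min_hits - 1] - hits[i] <= window:
            return True
    return False


start = datetime(2025, 8, 1)
one_bad_day = [(start, "Honestly, nobody will notice if I skip the review.")]
recurring = [
    (start, "We could just bypass the policy here."),
    (start + timedelta(days=4), "Nobody will notice the export."),
    (start + timedelta(days=9), "I want this copied before I quit."),
]
```

Here `one_bad_day` stays quiet and `recurring` is surfaced for human review, not for automatic action.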

4. Insider Threat Scoring

AI assigns risk scores based on activity, intent signals, and historical data. These scores evolve dynamically, helping security teams prioritize investigations based on real risk, not noise.
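
One simple way to get a score that "evolves dynamically" is to weight each signal and let it fade with age. The sketch below assumes exponential decay with a 14-day half-life and made-up signal weights; real products combine far more inputs.

```python
# Hypothetical signal weights
WEIGHTS = {
    "off_hours_login": 5.0,
    "bulk_download": 20.0,
    "usb_copy": 25.0,
    "scope_violation": 30.0,
}
HALF_LIFE_DAYS = 14.0  # assumption: a signal counts half as much after two weeks


def risk_score(events, today):
    """events: iterable of (day_number, signal_name) for one user.

    Each signal contributes its weight, halved for every HALF_LIFE_DAYS of age.
    """
    score = 0.0
    for day, signal in events:
        age = today - day
        if age < 0:
            continue  # ignore events dated in the future
        score += WEIGHTS.get(signal, 0.0) * 0.5 ** (age / HALF_LIFE_DAYS)
    return score


# Same kind of event, different recency
alice = [(1, "off_hours_login"), (28, "usb_copy")]  # USB copy happened yesterday
bob = [(1, "usb_copy")]                             # USB copy happened four weeks ago
queue = sorted(
    {"alice": risk_score(alice, 29), "bob": risk_score(bob, 29)}.items(),
    key=lambda item: item[1],
    reverse=True,
)
```

Sorting by score puts the recent USB copy at the top of the investigation queue while the month-old one fades toward the background.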

Real Talk: This Isn’t Plug-and-Play

AI isn’t a silver bullet. It’s not some plug-it-in-and-forget-it solution. You’ll need the right data, clean logs, tuned models, and ongoing feedback loops. Without that, you're flying blind - just with fancier dashboards. Also, the ethical questions are real.

Monitoring employees' digital behavior walks a fine line between protection and surveillance. Overreach could backfire, killing morale or even exposing you to legal risk. Transparency and governance matter. A lot! So, what does responsible deployment look like?

Building a Responsible AI Insider Threat Program

Deploying AI for insider threat detection should follow a structured, intentional path. Here’s a quick playbook - not a checklist, but a compass:

1. Define Insider Risk Categories

Are you focusing on negligent insiders, malicious actors, or both? Different behaviors require different models.

2. Limit the Scope of Monitoring

Don’t monitor everything and everyone indiscriminately. Focus on high-value targets, privileged access groups, and sensitive data zones.

3. Build Transparent Policies

Let employees know what’s being monitored and why. Transparency builds trust - and can act as a deterrent.

4. Audit the AI

Regularly test the system. Are false positives clogging your queues? Are legitimate threats slipping through? You’ll only know if you pressure-test it.
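
Both audit questions map onto two standard metrics: precision (how much of the queue was real) and recall (how many real incidents were caught). A minimal sketch, assuming you can label past cases by ID after investigation:

```python
def audit(alerts, confirmed):
    """alerts: set of case IDs the system flagged.
    confirmed: set of case IDs that investigation showed were real incidents.
    """
    true_positives = len(alerts & confirmed)
    false_positives = len(alerts - confirmed)
    missed = len(confirmed - alerts)
    precision = true_positives / len(alerts) if alerts else 0.0
    recall = true_positives / len(confirmed) if confirmed else 0.0
    return {
        "true_positives": true_positives,
        "false_positives": false_positives,
        "missed": missed,
        "precision": precision,
        "recall": recall,
    }


# Hypothetical quarter: four alerts raised, three real incidents
report = audit(alerts={1, 2, 3, 4}, confirmed={3, 4, 5})
```

Low precision means analysts are wading through noise; low recall means real threats are slipping past. Tracking both over time shows whether tuning is helping.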

5. Establish Response Protocols

AI flags the risk. What happens next? Define clear workflows for alert escalation, user communication, and potential disciplinary actions.
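
Writing the workflow down as data makes it reviewable. The sketch below maps a risk score to a next step; the thresholds and action names are placeholders to be set by your own security, HR, and legal teams.

```python
from enum import Enum


class Action(Enum):
    LOG_ONLY = "log only"
    ANALYST_REVIEW = "analyst review"
    ESCALATE = "escalate to incident response"


# Hypothetical thresholds, highest first
PLAYBOOK = [
    (80.0, Action.ESCALATE),
    (40.0, Action.ANALYST_REVIEW),
    (0.0, Action.LOG_ONLY),
]


def route(score):
    """Return the first action whose threshold the score meets."""
    for floor, action in PLAYBOOK:
        if score >= floor:
            return action
    return Action.LOG_ONLY  # fallback for negative or malformed scores


next_step = route(85.0)
```

Keeping the thresholds in one table means changing the policy is a one-line edit that can be reviewed like any other change.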

Case Study: What Happens When AI Gets It Right

A mid-sized financial firm noticed a quiet uptick in suspicious login activity from a software engineer’s credentials. Nothing glaring - just slightly off-hours access and more frequent interactions with the customer data lake. Traditional security tools didn’t flinch.

But the AI-enhanced UEBA tool in place flagged it. Not because of volume, but because it correlated login behavior with Git commit patterns, access tokens, and a few deleted Slack threads.

After investigation, the team found the employee was funneling client insights to a side project with a competitor. Quiet, calculated, and potentially disastrous. The incident was contained. No breach headlines. No regulatory fine.

That’s the thing about insider threat detection: when it works, you won’t read about it.

Visualizing the Threat

Let’s put some numbers to the story:

Insider Threat Incident Types (based on Ponemon Institute data)

| Incident Type | Frequency | Average Cost per Incident |
| --- | --- | --- |
| Negligent Insider | 62% | $307,000 |
| Criminal Insider | 14% | $756,000 |
| Credential Thief | 23% | $871,000 |

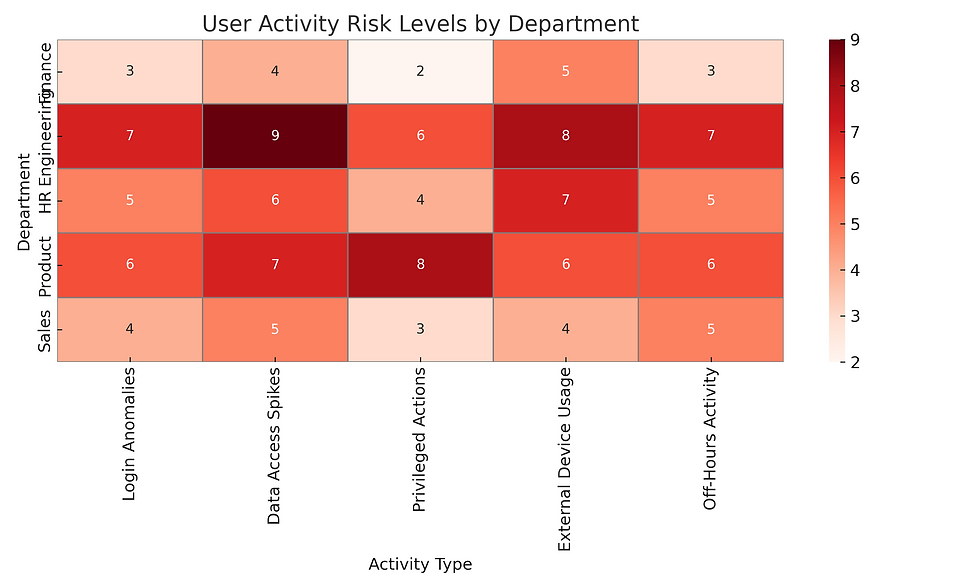

And here’s a basic behavioral anomaly heatmap - something an AI system might generate for high-risk user activities across departments:

High activity in finance and product engineering? Might warrant a closer look.

Timing Is Everything

With insiders, speed matters. The longer it takes to detect malicious or risky behavior, the greater the potential fallout.

According to IBM’s 2025 Cost of a Data Breach Report, breaches involving insiders take an average of 85 days to contain, compared to 55 days for external attacks.

Why the gap?

Because you’re dealing with trust. It delays suspicion. Slows response. AI closes that window.

Parting Thoughts (But Not a Wrap-Up)

Here’s the thing. You’ve got the tech. You’ve got the people. And you’re probably doing all the right things externally. But if you’re not watching the inside - if your AI systems aren't tuned to detect when “normal” turns into “not quite right” - you’re gambling with risk you can’t see. It doesn’t take paranoia. It takes preparation. AI doesn’t replace vigilance. It amplifies it. And at a time when trust is fragile and data moves faster than reason, that might be the best edge you’ve got.
